December 9th, 2010 — Data management

Big data has been one of the big topics of the year in terms of client queries coming into The 451 Group, and one of the recurring questions (especially from vendors and investors) has been: “how big is the big data market?”

The only way to answer that is to ask another question: “what do you mean by ‘big data’?” We have mentioned before that the term is ill-defined, so it is essential to work out what an individual means when they use the term.

In our experience they usually mean one of two things:

- Big data as a subset of overall data: specific volumes or classes of data that cannot be processed or analyzed by traditional approaches

- Big data as a superset of the entire data management market, driven by the ever-increasing volume and complexity of data

Our perspective is that big data, if it means anything at all, represents a subset of overall data. However, it is not one that can be measurably defined by the size of the data volume. Specifically, as we recently articulated, we believe:

“Big data is a term applied to data sets that are large, complex and dynamic (or a combination thereof) and for which there is a requirement to capture, manage and process the data set in its entirety, such that it is not possible to process the data using traditional software tools and analytic techniques within tolerable time frames.”

The confusion around the term big data also partly explains why we introduced the term “total data” to refer to a broader approach to data management, managing the storage and processing of all available data to deliver the necessary business intelligence.

The distinction is clearly important when it comes to sizing the potential opportunity. I recently came across a report from one of the big banks that put a figure on what it referred to as the “big data market”. However, they had used the superset definition.

The result was therefore not a calculation of the big data market, but a calculation of the total data management sector (although the method is in itself too simplistic for us to endorse the end result) since the approach taken was to add together the revenue estimates for all data management technologies – traditional and non-traditional.

Specifically, the bank had added up current market estimates for database software, storage and servers for databases, BI and analytics software, data integration, master data management, text analytics, database-related cloud revenue, complex event processing and NoSQL databases.

In comparison, the big data market is clearly a lot smaller, and represents a subset of revenue from traditional and non-traditional data management technologies, with a leaning towards the non-traditional technologies.

It is important to note, however, that big data cannot be measurably defined by the technology used to store and process it. As we have recently seen, not every use case for Hadoop or a NoSQL database – for example – involves big data.

Clearly this is a market that is a lot smaller than the one calculated by the bank, and the calculation required is a lot more complicated. We know, for example, that Teradata generated revenue of $489m in its third quarter. How much of that was attributable to big data?

Answering that requires a stricter definition of big data than is currently in use (by anyone). But as we have noted above, ‘big data’ cannot be defined by data volume, or the technology used to store or process it.

There’s a lot of talk about the “big data problem”. The biggest problem with big data, however, is that the term has not been – and arguably cannot be – defined in any measurable way.

How big is the big data market? You may as well ask “how long is a piece of string?”

If we are to understand the opportunity for storing and processing big data sets then the industry needs to get much more specific about what it is that is being stored and processed, and what we are using to store and process it.

December 6th, 2010 — Data management

The 451 Group has recently published a spotlight report examining the trends that we see shaping the data management segment, including data volume, complexity, real-time processing demands and advanced analytics, as well as a perspective that no longer treats the enterprise data warehouse as the only source of trusted data for generating business intelligence.

The report examines these trends and introduces the term ‘total data’ to describe the total opportunity and challenge provided by new approaches to data management.

Johan Cruyff, exponent of total football, inspiration for total data. Source: Wikimedia. Attribution: Bundesarchiv, Bild 183-N0716-0314 / Mittelstädt, Rainer / CC-BY-SA

Total data is not simply another term for big data; it describes a broader approach to data management, managing the storage and processing of big data to deliver the necessary BI.

Total data involves processing any data that might be applicable to the query at hand, whether that data is structured or unstructured, and whether it resides in the data warehouse, or a distributed Hadoop file system, or archived systems, or any operational data source – SQL or NoSQL – and whether it is on-premises or in the cloud.

In the report we explain how total data is influencing modern data management with respect to four key trends. To summarize:

- beyond big: total data is about processing all your data, regardless of the size of the data set

- beyond data: total data is not just about being able to store data, but the delivery of actionable results based on analysis of that data

- beyond the data warehouse: total data sees organisations complementing data warehousing with Hadoop, and its associated projects

- beyond the database: total data includes the emergence of private data clouds, and the expansion of data sources suitable for analytics beyond the database

The term ‘total data’ is inspired by ‘total football,’ the soccer tactic that emerged in the early 1970s and enabled Ajax of Amsterdam to dominate European football in the early part of the decade and The Netherlands to reach the finals of two consecutive World Cups, having failed to qualify for the four preceding competitions.

Unlike previous approaches that focused on each player having a fixed role to play, total football encouraged individual players to switch positions depending on what was happening around them while ensuring that the team as a whole fulfilled all the required tactical positions.

Although total data is not meant to be directly analogous to total football, we do see a connection with the latter’s fluidity that is enabled by no longer requiring players to fulfill specific roles, and total data’s desire to break down dependencies on the enterprise data warehouse as the single version of the truth, while letting go of assumptions that the relational database offers a one-size-fits-all answer to data management.

Total data is about more than data volumes. It’s about taking a broad view of available data sources and processing and analytic technologies, bringing in data from multiple sources, and having the flexibility to respond to changing business requirements.

A more substantial explanation of the concept of total data and its impact on information and infrastructure management methods and technologies is available here for 451 Group clients. Non-clients can also apply for trial access.

November 24th, 2010 — Data management

I was just looking through some slides handed to me by Cloudera’s John Kreisa and Mike Olson during our meeting last week and one of them jumped out at me.

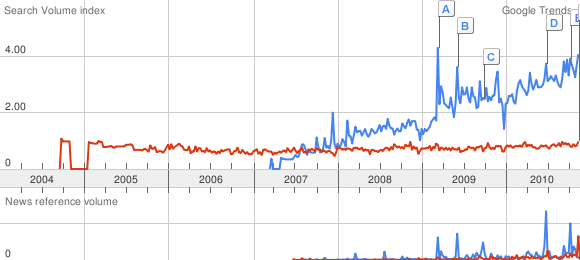

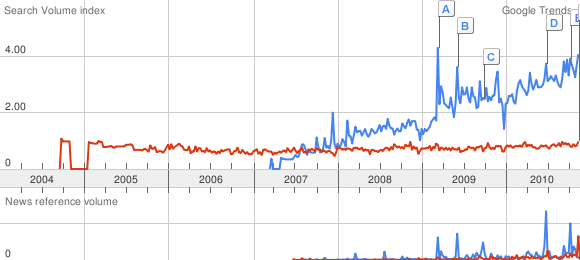

It contains a graphic showing a Google Trends result for searches for Hadoop and “Big Data”. See for yourself why the graphic stood out:

In case you hadn’t guessed, Hadoop is in blue and “Big Data” is in red.

Even taken with a pinch of salt it’s a huge validation of the level of interest in Hadoop. Removing the quotes to search for Big Data (red) doesn’t change the overall picture.

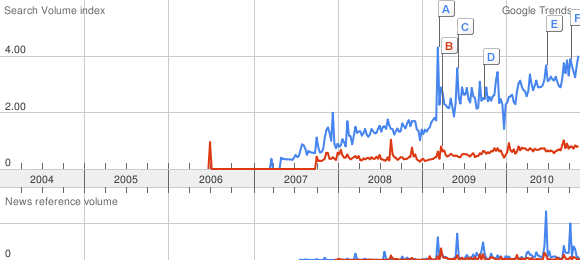

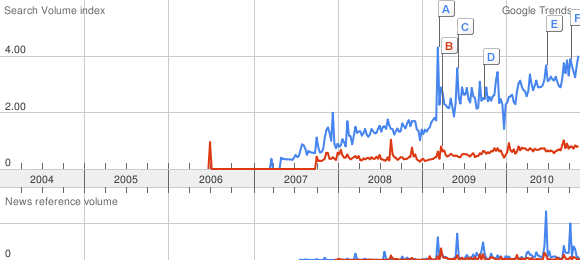

See also Hadoop (blue) versus MapReduce (red).

UPDATE: eBay’s Oliver Ratzesberger puts the comparisons above in perspective somewhat by comparing Joomla vs Hadoop.

November 18th, 2010 — Data management

A number of analogies have arisen in recent years to describe the importance of data and its role in shaping new business models and business strategies. Among these is the concept of the “data factory”, recently highlighted by Abhi Mehta of Bank of America to describe businesses that have realized that their greatest asset is data.

WalMart, Google and Facebook are good examples of data factories, according to Mehta, who is working to ensure that BofA joins the list as the first data factory for financial services.

Mehta lists three key concepts that are central to building a data factory:

- Believe that your core asset is data

- Be able to automate the data pipeline

- Know how to monetize your data assets

The idea of the data factory is useful in describing the organizations that we see driving the adoption of new data management and analytics concepts (Mehta has also referred to this as the “birth of the next industrial revolution”) but it has some less useful connotations.

In particular, the focus on data as something that is produced or manufactured encourages the obsession with data volume and capacity that has put the Big in Big Data.

Size isn’t everything, and the ability to store vast amounts of data is only really impressive if you also have the ability to process and analyze that data and gain valuable business insight from it.

While the focus in 2010 has been on Big Data, we expect the focus to shift in 2011 towards big data analytics. While the data factory concept describes what these organizations are, it does not describe what it is that they do to gain analytical insight from their data.

Another analogy that has been kicking around for a few years is the idea of data as the new oil. There are a number of parallels that can be drawn between oil and gas companies exploring the landscape in search of pockets of crude, and businesses exploring their data landscape in search of pockets of useable data.

A good example of this is eBay’s Singularity platform for deep contextual analysis, one use of which was to combine transactional data from the company’s data warehouse with behavioural data on its buyers and sellers, enabling the identification of top sellers and driving increased revenue from those sellers.

By exploring information from multiple sources in a single platform the company was able to gain a better perspective over its data than would be possible using data sampling techniques, revealing a pocket of data that could be used to improve business performance.

However, exploring data within the organization is only scratching the surface of what eBay has achieved. The real secret to eBay’s success has been in harnessing that retail data in the first place.

This is a concept I have begun to explore recently in the context of turning data into products. It occurs to me that the companies that represent the most success in this regard are those that are not producing data, but harnessing naturally occurring information streams to capture the raw data that can be turned into usable data via analytics.

There is perhaps no greater example of this than Facebook, now home to over 500 million people using it to communicate, share information and photos, and join groups. While Facebook is often cited as an example of new data production, that description is inaccurate.

Consider what these 500 million people did before Facebook. The answer, of course, is that they communicated, shared information and photos, and joined groups. The real genius of Facebook is that it harnesses a naturally occurring information stream and accelerates it.

Natural sources of data are everywhere, from the retail data that has been harnessed by the likes of eBay and Amazon, to the Internet search data that has been harnessed by Google, but also the data being produced by the millions of sensors in manufacturing facilities, data centres and office buildings around the world.

Harnessing that data is the first problem to solve; applying data analytics techniques to it, automating the data pipeline, and knowing how to monetize the data assets complete the picture.

Mike Loukides of O’Reilly recently noted: “the future belongs to companies and people that turn data into products.” The companies and people that stand to gain the most are those who treat data not as something to be produced and stockpiled, but as a natural energy source to be processed and analyzed.

November 12th, 2010 — Data management

CouchOne has become the first of the major NoSQL database vendors to publicly distance itself from the term NoSQL, something we have been expecting for some time.

While the term NoSQL enabled the likes of 10gen, Basho, CouchOne, Membase, Neo Technologies and Riptano to generate significant attention for their various database projects/products, it was always something of a flag of convenience.

Somewhat less convenient is the fact that grouping the key-value, document, graph and column family data stores together under the NoSQL banner masked their differentiating features and potential use cases.

As Mikael notes in the post: “The term ‘NoSQL’ continues to lump all the companies together and drowns out the real differences in the problems we try to tackle and the challenges we face.”

It was inevitable, therefore, that as the products and vendors matured the focus would shift towards specific use cases and the NoSQL movement would fragment.

CouchOne is by no means the only vendor thinking about distancing itself from NoSQL, especially since some of them are working on SQL interfaces. Again, we would see this fragmentation as a sign of maturity, rather than crisis.

The ongoing differentiation is something we plan to cover in depth with a report looking at the specific use cases of the “database alternatives” early in 2011.

It is also interesting that CouchOne is distancing itself from NoSQL in part due to the conflation of the term with Big Data. We have observed this ourselves and would agree that it is a mistake.

While some of the use cases for some of the NoSQL databases do involve large distributed data sets, not all of them do. We had noted that the launch of the CouchOne Mobile development environment was designed to play to the specific strengths of Apache CouchDB: peer-based bidirectional replication, including disconnected mode, and a crash-only design.

Incidentally, Big Data is another term we expect to diminish in usage in 2011, since Bigdata is a trademark of a company called SYSTAP.

Witness the fact that the Data Analytics Summit, which I’ll be attending next week, was previously the Big Data Summit. We assume that is also the reason Big Data News has been upgraded to Massive Data News.

The focus on big data sets and solving big data problems will continue, of course, but expect much less use of Big Data as a brand.

Similarly, while we expect many of the “NoSQL” databases to have a bright future, expect much less focus on the term NoSQL.

November 10th, 2010 — Data management

Next week I will be attending and giving a presentation at the Data Analytics Summit in New York. The event has been put together by Aster Data to discuss advancements in big data management and analytic processing.

I’ll be providing an introductory overview with a “motivational” guide to big data analytics, having some fun with some of the clichés involved with big data, and also presenting our view of the main trends driving business and technological innovation in data analytics.

I’ll also be introducing some thoughts about the emergence of new business models based on turning data into new product opportunities, examining the idea of the data factory, and “data as the new oil”, as well as data as a renewable energy source.

The event will also include presentations from Aster Data, as well as Barnes & Noble, comScore, Amazon Web Services, Sungard, MicroStrategy and Dell, with tracks focusing on financial services and Internet and retail.

For more details about the event, and to register, visit http://www.dataanalyticssummit.com/2010/nyc/.
